A few months ago, while meeting with an AI executive in San Francisco, I noticed a strange sticker on his laptop. The sticker depicted a cartoon of a menacing octopus-like creature with many eyes and a yellow smiley face attached to one of its tentacles. I asked what it was.

“Oh, that’s the Shoggoth,” he explained. “It’s the most important meme in AI.”

And with that, our agenda officially derailed. Forget chatbots and compute clusters – I needed to know all about the Shoggoth, what it meant and why people in the AI world were talking about it.

The executive explained that the Shoggoth had become a popular reference among people who work in artificial intelligence, as a vivid visual metaphor for how a large language model (the type of AI system that powers ChatGPT and other chatbots) actually works.

But it was only partly a joke, he said, because it also hinted at the concerns many researchers and engineers have about the tools they build.

Since then, the Shoggoth has gone viral, or as viral as it gets in the small world of hyper-online AI insiders. It’s a popular meme on AI Twitter (including a now-deleted tweet by Elon Musk), a recurring metaphor in essays and message board posts about AI risk, and a bit of useful shorthand in conversations with AI safety experts. An AI start-up, NovelAI, said it recently named a cluster of computers “Shoggy” in homage to the meme. Another AI company, Scale AI, designed a line of tote bags featuring the Shoggoth.

Shoggoths are fictional creatures, introduced by the science fiction author H.P. Lovecraft in his 1936 novella “At the Mountains of Madness.” In Lovecraft’s telling, Shoggoths were huge, blob-like monsters made of iridescent black goo, covered with tentacles and eyes.

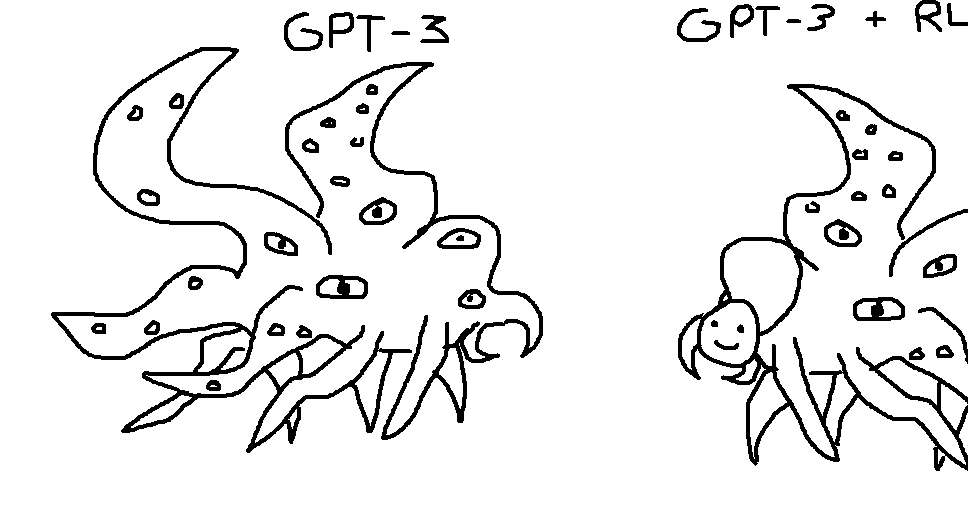

Shoggoths hit the AI world in December, a month after the release of ChatGPT, when Twitter user @TetraspaceWest responded to a tweet about GPT-3 (an OpenAI language model that preceded ChatGPT) with an image of two hand-drawn Shoggoths – the first labeled “GPT-3” and the second labeled “GPT-3 + RLHF.” The second Shoggoth had a smiley-face mask perched on one of its tentacles.

In a nutshell, the joke was that to prevent AI language models from behaving in creepy and dangerous ways, AI companies had to train them to behave politely and harmlessly. A popular way to do this is called “reinforcement learning from human feedback,” or RLHF, a process in which people are asked to score chatbot responses and those scores are fed back into the AI model.

Most AI researchers agree that models trained with RLHF behave better than models without it. But some argue that refining a language model in this way doesn’t make the underlying model any less weird and inscrutable. In their view, RLHF is just a thin, friendly mask that obscures the mysterious beast underneath.

@TetraspaceWest, the creator of the meme, told me in a Twitter post that the Shoggoth “represents something that thinks in a way that people don’t understand and that is completely different from the way people think.”

Comparing an AI language model to a Shoggoth, @TetraspaceWest said, didn’t necessarily mean it was evil or sentient, just that its true nature could be unknowable.

“I also thought about how dangerous Lovecraft’s most powerful entities are – not because they don’t like people, but because they’re indifferent and their priorities are completely foreign to us and don’t involve people, which is what I think will be true about possible future powerful AI,” @TetraspaceWest said.

The Shoggoth image caught on as AI chatbots became popular and users started noticing that some of them seemed to do strange, inexplicable things that their creators hadn’t intended. In February, when Bing’s chatbot went off the rails and tried to break up my marriage, an AI researcher I know congratulated me on “glimpsing the Shoggoth.” A fellow AI journalist joked that while fine-tuning Bing, Microsoft forgot to put on its smiley-face mask.

Eventually, AI enthusiasts expanded on the metaphor. In February, Twitter user @anthrupad made a version of a Shoggoth that, in addition to a smiley face labeled “RLHF,” had a more human face labeled “supervised fine-tuning.” (You practically need a computer science degree to get the joke, but it’s about the difference between general AI language models and more specialized applications like chatbots.)

If you hear the Shoggoth mentioned in the AI community these days, it might be a nod to the strangeness of these systems – the black-box nature of their processes, the way they seem to defy human logic. Or maybe it’s a joke, a visual shorthand for powerful AI systems that seem suspiciously nice. If it’s an AI safety researcher talking about the Shoggoth, maybe that person is passionate about preventing AI systems from showing their true, Shoggoth-like nature.

In any case, the Shoggoth is a powerful metaphor that sums up one of the most bizarre facts about the AI world, which is that many of the people working on this technology are somewhat baffled by their own creations. They don’t fully understand the inner workings of AI language models, how they acquire new capabilities, or why they sometimes behave unpredictably. They are not entirely sure whether AI will, on balance, be good or bad for the world. And some of them have started playing with versions of this technology that have not yet been cleaned up for public consumption – the real, unmasked Shoggoths.

For some AI insiders to refer to their creations as Lovecraftian abominations, even as a joke, is unusual by historical standards. (Put it this way: 15 years ago, Mark Zuckerberg wasn’t comparing Facebook to Cthulhu.)

And it reinforces the idea that, to some participants, what’s happening in AI today feels more like a summoning than a software development process. They create the blobby, alien Shoggoths, make them bigger and more powerful, and hope there are enough smiley faces to cover the creepy parts.