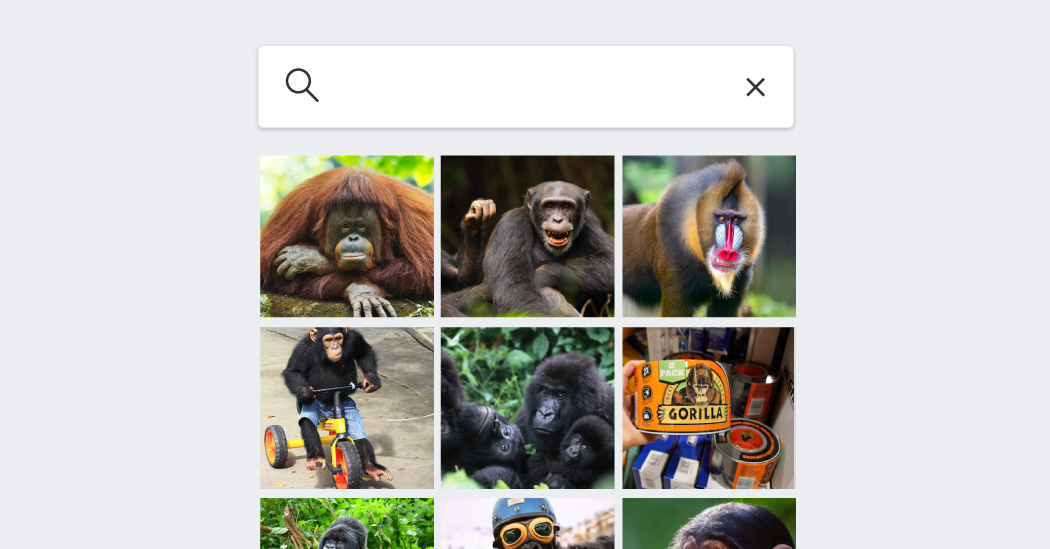

Eight years after a controversy over black people being falsely labeled as gorillas by image analysis software — and despite major advances in computer vision — tech giants are still afraid of repeating the mistake.

When Google released its standalone Photos app in May 2015, people were amazed at what it could do: analyze images to label the people, places, and things in them, a remarkable consumer offering at the time. But a few months after its release, a software developer, Jacky Alciné, discovered that Google had labeled photos of him and a friend, who are both black, as “gorillas,” a term that is particularly offensive because it echoes centuries of racist tropes.

In the ensuing controversy, Google prevented its software from categorizing anything in Photos as gorillas and promised to fix the problem. Eight years later, with significant advances in artificial intelligence, we tested whether Google had solved the problem and looked at similar tools from its competitors: Apple, Amazon and Microsoft.

There was one member of the primate family that Google and Apple were able to recognize: lemurs, the permanently startled-looking, long-tailed animals that share opposable thumbs with humans but are more distantly related to us than apes are.

The tools from Google and Apple were clearly the most advanced when it came to image analysis.

Still, Google, whose Android software powers most of the world’s smartphones, has decided to disable the ability to visually search for primates for fear of making an offensive mistake and labeling a person as an animal. And Apple, with technology that performed similarly to Google’s in our test, also appeared to disable the ability to search for monkeys and apes.

Consumers may not need to perform such a search very often, although in 2019 an iPhone user complained on Apple’s customer support forum that the software “can’t find monkeys in photos on my device.” But the problem raises bigger questions about other unfixed or unfixable flaws lurking in services that rely on computer vision – a technology that interprets visual images – as well as other products powered by AI.

Mr. Alciné was dismayed to learn that Google still hasn’t fully resolved the problem, saying that society is placing too much trust in technology.

“I will forever have no faith in this AI,” he said.

Computer vision products are now used for tasks as mundane as sending an alert when a package is at your doorstep, and as consequential as navigating cars and locating perpetrators in law enforcement investigations.

Errors may reflect racist views among those encoding the data. In the gorilla incident, two former Google employees who worked on this technology said the problem was that the company didn’t put enough photos of black people in the image collection it used to train its AI system. As a result, the technology was not familiar enough with dark-skinned people and confused them with gorillas.

As artificial intelligence becomes more embedded in our lives, it raises fears of unintended consequences. While computer vision products and AI chatbots like ChatGPT are different, both depend on vast amounts of data to train the software, and both can fail because of errors in the data or biases built into their code.

Microsoft recently restricted users’ ability to interact with a chatbot built into its search engine, Bing, after it began instigating inappropriate conversations.

Microsoft’s decision, like Google’s choice to prevent its algorithm from identifying gorillas altogether, illustrates a common industry approach: walling off technology features that malfunction rather than fixing them.

“Solving these problems is important,” said Vicente Ordóñez, a professor at Rice University who studies computer vision. “How can we trust this software for other scenarios?”

Michael Marconi, a Google spokesperson, said Google had prevented its Photos app from labeling anything as a monkey or ape because it decided the benefit “doesn’t outweigh the risk of harm.”

Apple declined to comment on users’ inability to search for most primates in its app.

Representatives from Amazon and Microsoft said the companies were always looking to improve their products.

Poor visibility

When Google developed its Photos app, which was released eight years ago, it collected a large number of images to train the AI system to identify people, animals and objects.

Its significant oversight, that there were not enough photos of black people in the training data, later caused the app to malfunction, two former Google employees said. The company failed to uncover the “gorilla” problem at the time because it had not asked enough employees to test the feature before its public debut, the former employees said.

Google apologized profusely for the gorilla incident, but it was one of many episodes in the wider tech industry that have led to accusations of bias.

Other products that have been criticized include HP’s facial recognition webcams, which failed to detect some people with dark skin, and the Apple Watch, which, according to a lawsuit, failed to accurately read blood oxygen levels across skin tones. The errors suggested that tech products were not designed for dark-skinned people. (Apple pointed to a 2022 paper detailing its efforts to test its blood oxygen app on a “wide range of skin types and tones.”)

Years after the Google Photos flaw, the company ran into a similar issue with its Nest home security camera during internal testing, according to a person familiar with the incident who worked at Google at the time. The Nest camera, which used AI to determine whether someone on a property was familiar or unfamiliar, mistook some black people for animals. Google rushed to fix the problem before users could access the product, the person said.

However, Nest customers continue to complain on the company’s forums about other flaws. In 2021, a customer received a notification that his mother was ringing the doorbell, but found his mother-in-law on the other side of the door. When users complained that the system was mixing up faces they had marked as “familiar,” a customer service representative on the forum advised them to delete all their labels and start over.

Mr. Marconi, the Google spokesperson, said that “our goal is to prevent this kind of error from ever happening.” He added that the company had improved its technology “by collaborating with experts and diversifying our image datasets.”

In 2019, Google attempted to improve a facial recognition feature for Android smartphones by increasing the number of dark-skinned people in its dataset. But the contractors Google hired to collect facial scans reportedly resorted to a troubling tactic to compensate for that lack of diverse data: They targeted homeless people and students. Google executives called the incident “deeply disturbing” at the time.

The solution?

While Google worked behind the scenes to improve the technology, it never allowed users to judge those efforts.

Margaret Mitchell, a researcher and co-founder of Google’s Ethical AI group, joined the company after the gorilla incident and worked with the Photos team. She said in a recent interview that she supported Google’s decision to “remove the label of gorillas, at least for a while.”

“You have to think about how often someone needs to label a gorilla versus perpetuating harmful stereotypes,” said Dr. Mitchell. “The benefits don’t outweigh the potential harms of getting it wrong.”

Dr. Ordóñez, the professor, speculated that Google and Apple were probably now capable of distinguishing primates from humans, but that they didn’t want to enable the feature given the potential reputational risk if it misfired again.

Google has since released a more powerful image analysis product, Google Lens, a web search tool that uses photos instead of text. Wired found in 2018 that the tool also failed to identify a gorilla.

These systems are never foolproof, said Dr. Mitchell, who no longer works at Google. Because billions of people use Google’s services, even rare glitches that happen to only one person out of a billion users will surface.

“It only takes one mistake to have huge social consequences,” she said, referring to it as “the poisoned needle in a haystack.”