Benj Edwards / Ars Technica

Last week, Swiss software engineer Matthias Bühlmann discovered that the popular image synthesis model Stable Diffusion could compress existing bitmap images with fewer visual artifacts than JPEG or WebP at high compression ratios, although there are important caveats.

Stable Diffusion is an AI image synthesis model that typically generates images based on text descriptions (called “prompts”). The AI model learned this ability by studying millions of images from the Internet. During the training process, the model makes statistical associations between pictures and related words, making the most important information about each picture much smaller and stored as ‘weights’. These are mathematical values that represent what the AI image model knows, so to speak.

When Stable Diffusion analyzes and “compresses” images into weight form, they are in what researchers call “latent space”, which is a way of saying that they exist as a kind of fuzzy potential that can be realized in images once they are. decoded . With Stable Diffusion 1.4, the weight file is about 4 GB, but it represents knowledge about hundreds of millions of images.

While most people use Stable Diffusion with text prompts, Bühlmann cut out the text encoding and instead forced his images through Stable Diffusion’s image encoding process, which takes a low-precision 512×512 image and turns it into a latent 64×64 with higher precision space representation. At this point, the image exists on a much smaller data size than the original, but it can still be expanded (decoded) to a 512×512 image with fairly good results.

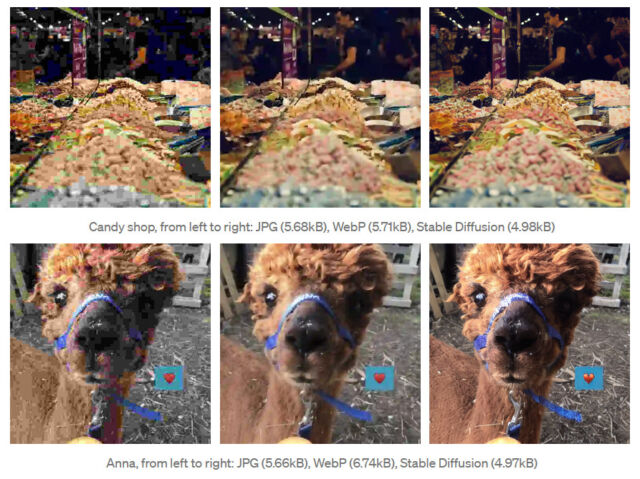

While conducting tests, Bühlmann found that images compressed with Stable Diffusion looked subjectively better at higher compression ratios (smaller file size) than JPEG or WebP. In one example, he shows a candy store photo compressed to 5.68 KB with JPEG, 5.71 KB with WebP, and 4.98 KB with Stable Diffusion. The stable diffusion image appears to have more resolved detail and less obvious compression artifacts than those compressed in the other formats.

However, Bühlmann’s method currently has significant limitations: it doesn’t do well with faces or text, and in some cases it can hallucinate detailed features in the decoded image that weren’t present in the source image. (You probably don’t want your image compressor inventing details in an image that doesn’t exist.) Decoding also requires the file with stable diffusion weights of 4 GB and additional decoding time.

While this use of Stable Diffusion is unconventional and more of a fun hack than a practical solution, it could potentially point to a new future use of image synthesis models. Bühlmann’s code can be found on Google Colab and you can find more technical details about his experiment in his post on Towards AI.